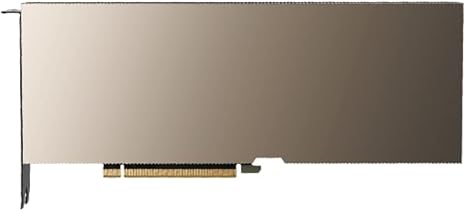

NVIDIA A100 80GB Tensor Core GPU

About the Product

- Powered by advanced Ampere architecture for high-performance computing

- Delivers exceptional performance for AI training and inference workloads

- Equipped with 80GB HBM2e memory for handling massive datasets

- Memory bandwidth of 1,935 GB/s (nearly 2 TB/s) for accelerated processing

- Supports Multi-Instance GPU (MIG) for workload partitioning

- Optimized for data centers, cloud computing, and enterprise environments

- Compatible with NVLink/NVSwitch for scalable multi-GPU setups

- Reliable performance for mission-critical and compute-intensive applications

A100 80GB Graphics Card – 80 GB HBM2e ECC – Bulk Packaging and Accessories VCI

Product Overview

The NVIDIA A100 80GB Tensor Core GPU is a powerful data center accelerator engineered to deliver exceptional performance for artificial intelligence (AI), deep learning, and high-performance computing (HPC) workloads. Built on the advanced Ampere architecture, it provides unmatched processing capabilities, enabling organizations to accelerate complex computations, large-scale data analytics, and next-generation AI applications.

With its massive 80GB of high-bandwidth HBM2e memory, the A100 allows users to handle extremely large datasets and train sophisticated machine learning models with ease. Its third-generation Tensor Cores deliver breakthrough performance for both training and inference, significantly reducing processing time and improving efficiency across demanding workloads.

Designed for scalability and flexibility, the A100 supports Multi-Instance GPU (MIG) technology, allowing a single GPU to be partitioned into multiple independent instances. This makes it ideal for multi-user environments and cloud deployments, maximizing resource utilization and operational efficiency.

Whether deployed in enterprise data centers or cloud infrastructures, the NVIDIA A100 80GB ensures reliable, high-throughput performance for mission-critical applications, making it a leading choice for AI innovation and advanced computing.

Technical Specifications

| Specification | Value |
|---|---|
| Brand | NVIDIA |
| Product Name | NVIDIA A100 Tensor Core |
| GPU Architecture | NVIDIA Ampere |
| CUDA Cores | 6912 |
| Streaming Multiprocessors | 108 |
| Tensor Cores | 432 (third generation) |
| FP64 | 9.7 TFLOPS |
| FP64 Tensor Core | 19.5 TFLOPS |
| FP32 | 19.5 TFLOPS |
| GPU Memory | 80GB HBM2e |
| GPU Memory Bandwidth | 1,935 GB/s |
| Max Thermal Design Power (TDP) | 300W |
| NVLink | 2-Way, 2-Slot, 600 GB/s Bidirectional |
| Multi-Instance GPU | Up to 7 MIGs @ 10GB |
| Form Factor | PCIe dual-slot air-cooled or single-slot liquid-cooled |
| Graphics Bus | PCIe 4.0 x16 |
| Thermal Solution | Passive |
| vGPU Support | NVIDIA Virtual Compute Server (vCS) |
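The headline figures in the table are related to each other, and a short calculation can make that visible. The sketch below is illustrative only: the core counts and bandwidth come from the table, while the 1.41 GHz boost clock is an assumption that this page does not list. The function names are made up for this example.

```python
# Figures taken from the specification table above.
CUDA_CORES = 6912
STREAMING_MULTIPROCESSORS = 108
TENSOR_CORES = 432
MEMORY_BANDWIDTH_GB_S = 1935

# Assumption: boost clock of 1.41 GHz (not stated on this page).
BOOST_CLOCK_GHZ = 1.41


def peak_fp32_tflops(cuda_cores: int, clock_ghz: float) -> float:
    """Peak FP32 rate, counting one fused multiply-add as 2 operations per core per cycle."""
    return cuda_cores * 2 * clock_ghz / 1000.0


def ridge_point_flops_per_byte(tflops: float, bandwidth_gb_s: float) -> float:
    """FLOPs a kernel must perform per byte read before compute, not memory, is the limit."""
    return (tflops * 1e12) / (bandwidth_gb_s * 1e9)


cores_per_sm = CUDA_CORES // STREAMING_MULTIPROCESSORS           # 64
tensor_cores_per_sm = TENSOR_CORES // STREAMING_MULTIPROCESSORS  # 4
fp32 = peak_fp32_tflops(CUDA_CORES, BOOST_CLOCK_GHZ)
ridge = ridge_point_flops_per_byte(fp32, MEMORY_BANDWIDTH_GB_S)

print(f"CUDA cores per SM:   {cores_per_sm}")
print(f"Tensor Cores per SM: {tensor_cores_per_sm}")
print(f"Peak FP32:           {fp32:.2f} TFLOPS")
print(f"Ridge point:         {ridge:.1f} FLOP/byte")
```

Under that clock assumption the result lands close to the 19.5 TFLOPS FP32 figure in the table. The ridge point suggests that workloads doing fewer than roughly 10 FP32 operations per byte of memory traffic are more likely to be limited by memory bandwidth than by compute.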

What Makes It Special

1. AI & Deep Learning Powerhouse

- Handles very large models (like GPT-type models)

- Up to 20× higher performance than the previous GPU generation, according to NVIDIA

2. Massive Memory

- 80GB of memory allows:

  - Training on very large datasets

  - Running larger AI models on a single GPU without splitting them across devices
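Whether a model fits in 80GB without splitting can be estimated from its parameter count. The sketch below is a rough back-of-the-envelope check, not a sizing tool: it counts weights only, treats 80GB as 80 billion bytes, and adds a hypothetical 20% overhead for activations and runtime buffers. Training needs considerably more memory for gradients and optimizer state.

```python
GPU_MEMORY_GB = 80  # from the specification table

# Bytes needed to store one parameter in each numeric format.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weights_gb(params_billions: float, dtype: str) -> float:
    """Memory for the weights alone, in GB (1 billion params * N bytes = N GB)."""
    return params_billions * BYTES_PER_PARAM[dtype]


def fits_on_one_gpu(params_billions: float, dtype: str,
                    overhead_fraction: float = 0.2) -> bool:
    """Rough inference-only check; overhead_fraction is an assumed safety margin."""
    needed = weights_gb(params_billions, dtype) * (1 + overhead_fraction)
    return needed <= GPU_MEMORY_GB


for size in (13, 30, 70):
    verdict = "fits" if fits_on_one_gpu(size, "fp16") else "needs splitting"
    print(f"{size}B parameters in FP16: {weights_gb(size, 'fp16'):.0f} GB of weights, {verdict}")
```

With these assumptions, 13B and 30B parameter models in FP16 fit on a single card, while a 70B model would need a smaller numeric format or more than one GPU.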

3. Scalability

- Works with:

  - NVLink / NVSwitch

  - Multi-GPU clusters (even thousands of GPUs)

4. Partitioning (MIG)

- One GPU can serve multiple users or tasks efficiently
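To illustrate how partitioning might be planned, the sketch below picks the smallest MIG instance that covers each job's memory need and checks that the total stays within seven compute slices. The profile list is a subset taken from NVIDIA's MIG documentation rather than from this page, and the model is deliberately simplified: it ignores the placement rules that real hardware enforces. The function names are hypothetical.

```python
# Subset of A100 80GB MIG profiles: (name, compute slices, memory in GB).
# Assumption: values follow NVIDIA's MIG documentation, not this page.
MIG_PROFILES = [
    ("1g.10gb", 1, 10),
    ("2g.20gb", 2, 20),
    ("3g.40gb", 3, 40),
    ("7g.80gb", 7, 80),
]
MAX_COMPUTE_SLICES = 7  # matches "Up to 7 MIGs" in the specification table


def smallest_profile(required_gb: float) -> tuple:
    """Return the first (smallest) profile with enough memory for the job."""
    for profile in MIG_PROFILES:
        if profile[2] >= required_gb:
            return profile
    raise ValueError(f"{required_gb} GB exceeds the memory of a single GPU")


def plan_instances(job_memory_gb: list) -> tuple:
    """Map each job to a profile name and report whether all fit on one GPU."""
    chosen = [smallest_profile(gb) for gb in job_memory_gb]
    slices_used = sum(profile[1] for profile in chosen)
    return [profile[0] for profile in chosen], slices_used <= MAX_COMPUTE_SLICES


names, fits = plan_instances([6, 9, 4, 10, 7, 8, 5])
print(names, "fits on one GPU" if fits else "needs more than one GPU")
```

Seven jobs that each need 10GB or less map onto seven of the smallest instances in this model, while an eighth job would need a second GPU.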

Typical Use Cases

- AI model training (ChatGPT-like systems)

- Data science & analytics

- Cloud computing (AWS, Azure, Google Cloud)

- Scientific research (physics, genomics)

- Enterprise workloads

❗ Important Notes

- ❌ Not suitable for gaming PCs

- ❌ No display outputs

- 💰 Very expensive (often $10,000+ depending on version)

- 🖥️ Requires specialized servers, not normal desktops

A100 vs Consumer GPUs (Simple Insight)

- Compared to RTX GPUs:

  - Less focused on graphics

  - More focused on AI computation and memory

- Still widely used because of its large memory and strong AI ecosystem

Bottom Line

The A100 80GB is a professional AI accelerator, not a consumer graphics card. It’s built for organizations, research labs, and cloud providers that need extreme computing power.
